What is a Signed Language?

Signed languages are spontaneously arising natural languages used by Deaf people. Many different, unrelated signed languages exist throughout the world: wherever there are communities of Deaf people, there is a signed language (Senghas, Kita, & Özyürek, 2004). The signed language used in the US and parts of Canada is known as American Sign Language (ASL).

Spoken languages may be the dominant communication system used throughout the world, but signed languages are neither manual translations of the spoken languages used by hearing people nor simply conventionalized gestures. Rather, signed languages are autonomous languages that exhibit linguistically complex structures and are fully able to convey abstract concepts. Each sign exhibits a complex hierarchical linguistic structure (Corina & Sandler, 1993). William Stokoe's groundbreaking work in the 1960s launched the formal study of ASL in the United States.

Sign Language Acquisition

All children come into the world ready to learn any language they are exposed to, spoken or signed (Krentz & Corina, 2008). Deaf children who are born into a deaf family and exposed to a signed language from birth acquire sign language on a developmental time course similar to that of hearing children natively acquiring a spoken language (Anderson & Reilly, 2002). They begin producing language at around 12 months and continue to develop in language processing efficiency over the first few years of life (MacDonald et al., submitted). However, over 90% of deaf children are born to hearing parents who do not know a signed language. These deaf children often experience delayed exposure to a signed language. Such delayed exposure can lead to delayed acquisition and lasting deficits in language ability (Mayberry, 2010).

Cross Modal Priming

fMRI Activation for Deaf Readers

Neurobiology of Sign Languages

Neurological research supports behavioral findings of many similarities between spoken and signed languages. This neurological evidence comes from rare case studies on aphasia and cortical stimulation as well as from a growing body of neuroimaging literature.

Aphasia

Brain injury and stroke often result in aphasia: brain-based difficulties in language production (Broca's aphasia) or language comprehension (Wernicke's aphasia). Both types of aphasia have been documented in deaf signers with damage to the left hemisphere. An example of Broca's aphasia was seen in a deaf signer who could understand ASL well but had great difficulty in ASL production (Poizner et al., 1987). Wernicke's aphasia was seen in a deaf signer who struggled to comprehend simple two-step commands and many single signs in a picture-pointing task (Corina et al., 1992). While deaf signers with aphasia struggle with sign language comprehension and production, their ability to understand and act out gestures and pantomime remains relatively normal (Corina et al., 1992; Hickok, Love-Geffen, & Klima, 2002; Marshall et al., 2004). Rare aphasia studies like these demonstrate that sign languages are not simple gestural systems but rather, like spoken languages, are formal languages processed in the left hemisphere.

Cortical stimulation mapping

Cortical stimulation mapping (CSM) is a routine preliminary procedure performed to assess brain functioning in epileptic patients. Rare case studies with Deaf epileptic patients have been performed to locate the areas of the brain that are used for sign language perception and production so that those areas can be protected during brain surgery. When a Deaf signer undergoing CSM was asked to sign the names of simple pictures, he made specific errors that were linked to the stimulation of specific parts of the brain (Broca's area and the supramarginal gyrus) (Corina et al., 1999).

Neuroimaging

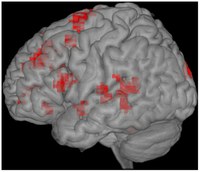

Neuroimaging research (fMRI, EEG, TMS, fNIRS and MEG) on sign language

In summary, the body of research on the neurobiology of sign languages has shown that the neural systems supporting spoken and signed language processing are highly congruent, although there are differences between the two. In general, what is shared are the language areas in the left hemisphere, which are recruited for the processing of all natural languages. Differences seem to reflect modality-specific processing demands. For example, comprehension of sign language recruits the right hemisphere in early-exposed signers (Newman et al., 2002).

ASL Lexical Frequency and Age of Acquisition Norm Project

The comparison of signed and spoken language processing provides a powerful avenue for understanding the mechanisms of human language processing. Psycholinguistic studies of language processing show that factors such as frequency of occurrence and age of acquisition influence the time course of recognition.

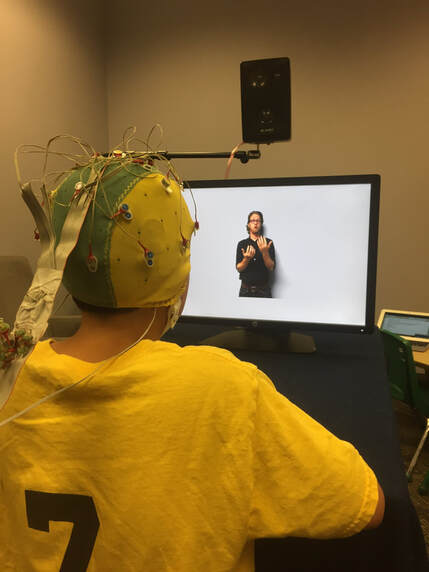

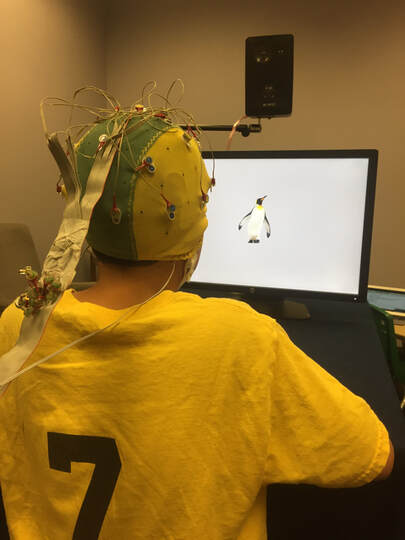

We have collected ratings of ASL frequency and age of acquisition for 140 ASL signs using Ensemble, an automated internet survey tool (Tomic & Janata, 2007). Our ratings include data from three groups of deaf ASL signers: native signers (n = 36), early learners of ASL (n = 25), and late learners of ASL (n = 29).

Each subject viewed and rated approximately 60 of the 140 signs. For each sign, subjects were asked to rate how often they used it and when they felt they first learned it, to provide an English translation, and to indicate whether they use the same variant of the sign. Subjects also provided a confidence rating for the frequency of use and age of acquisition ratings.
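As a rough illustration of what this design implies, the short sketch below estimates how many ratings each sign would receive from each group. It uses only the group sizes and the approximate figure of 60 signs per subject given above. It also assumes that signs were distributed evenly across subjects, which the description does not state, and the function name `expected_ratings_per_sign` is purely illustrative.

```python
# Group sizes as reported in the text.
group_sizes = {"native": 36, "early": 25, "late": 29}

TOTAL_SIGNS = 140        # signs in the norming set
SIGNS_PER_SUBJECT = 60   # approximate number of signs each subject rated


def expected_ratings_per_sign(n_subjects,
                              signs_per_subject=SIGNS_PER_SUBJECT,
                              total_signs=TOTAL_SIGNS):
    """Average number of ratings per sign.

    ASSUMPTION: signs are spread evenly across subjects; the actual
    assignment scheme is not described in the text.
    """
    return n_subjects * signs_per_subject / total_signs


if __name__ == "__main__":
    for group, n in group_sizes.items():
        print(f"{group}: about {expected_ratings_per_sign(n):.1f} ratings per sign")
    total_subjects = sum(group_sizes.values())
    print(f"all groups: about {expected_ratings_per_sign(total_subjects):.1f} ratings per sign")
```

Under these assumptions each sign would be rated by roughly 10 to 15 signers per group. The actual counts per sign may differ, since the figure of 60 signs per subject is approximate.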

The specific instructions used can be found here.

The results from the survey can be found here.

References:

Anderson, D., & Reilly, J. (2002). The MacArthur communicative development inventory: Normative data for American Sign Language. Journal of Deaf Studies and Deaf Education, 83-106.

Corina, D., & Sandler, W. (1993). On the nature of phonological structure in sign language. Phonology, 10(2), 165-207.

Corina, D. P., & Knapp, H. (2006). Sign language processing and the mirror neuron system. Cortex, 42(4), 529-539.

Corina, D. P., McBurney, S. L., Dodrill, C., Hinshaw, K., Brinkley, J., & Ojemann, G. (1999). Functional roles of Broca's area and SMG: Evidence from cortical stimulation mapping in a deaf signer. NeuroImage, 10(5), 570-581.

Corina, D. P., Poizner, H., Bellugi, U., Feinberg, T., Dowd, D., & O'Grady-Batch, L. (1992). Dissociation between linguistic and nonlinguistic gestural systems: A case for compositionality. Brain and Language, 43(3), 414-447.

Krentz, U. C., & Corina, D. P. (2008). Preference for language in early infancy: The human language bias is not speech specific. Developmental Science, 11(1), 1-9.

Mayberry, R. I. (2010). Early language acquisition and adult language ability: What sign language reveals about the critical period for language. In M. Marschark & P. Spencer (Eds.), Oxford Handbook of Deaf Studies, Language, and Education, Volume 2, 281-291.

Senghas, A., Kita, S., & Özyürek, A. (2004). Children creating core properties of language: Evidence from an emerging sign language in Nicaragua. Science, 305(5691), 1779-1782.
